Key Features

Everything you need to work with LLMs, nothing you don't.

Workspace

Work directly on your filesystem. Supports vibe coding, notebooks, knowledge bases, and notebook vaults.

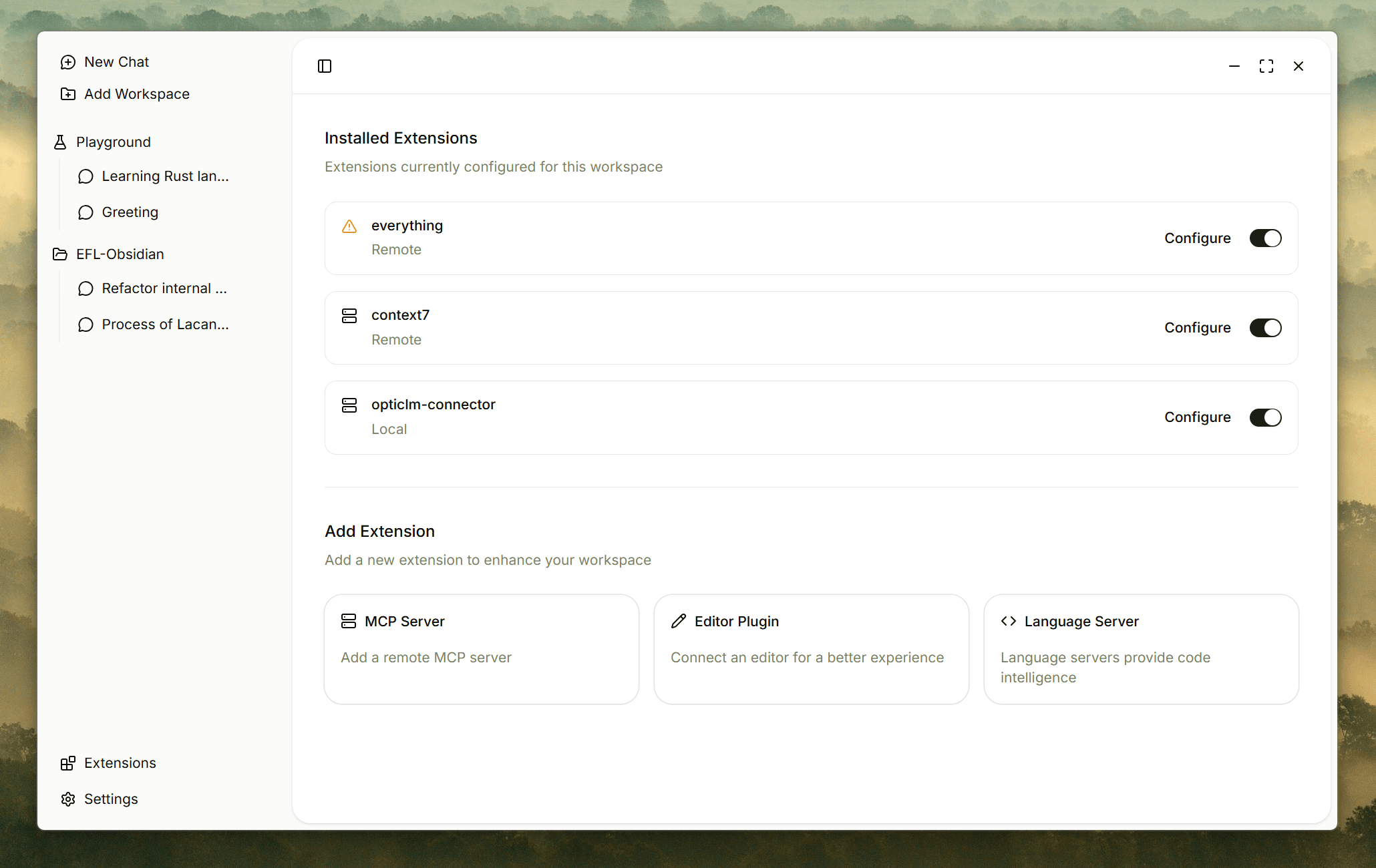

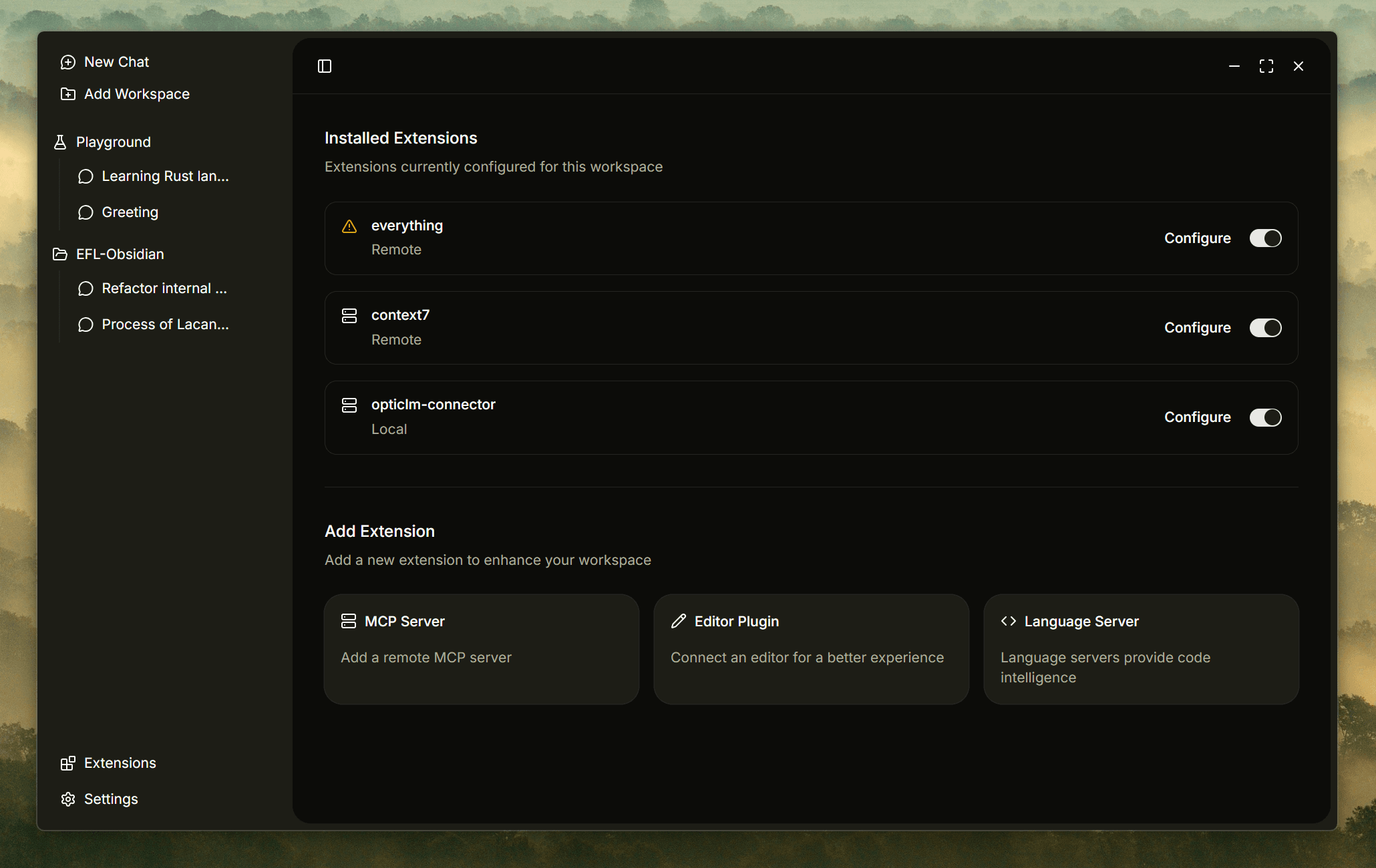

Extension

Connect MCP servers and integrate with VS Code, Obsidian, and LSP for a seamless workflow.

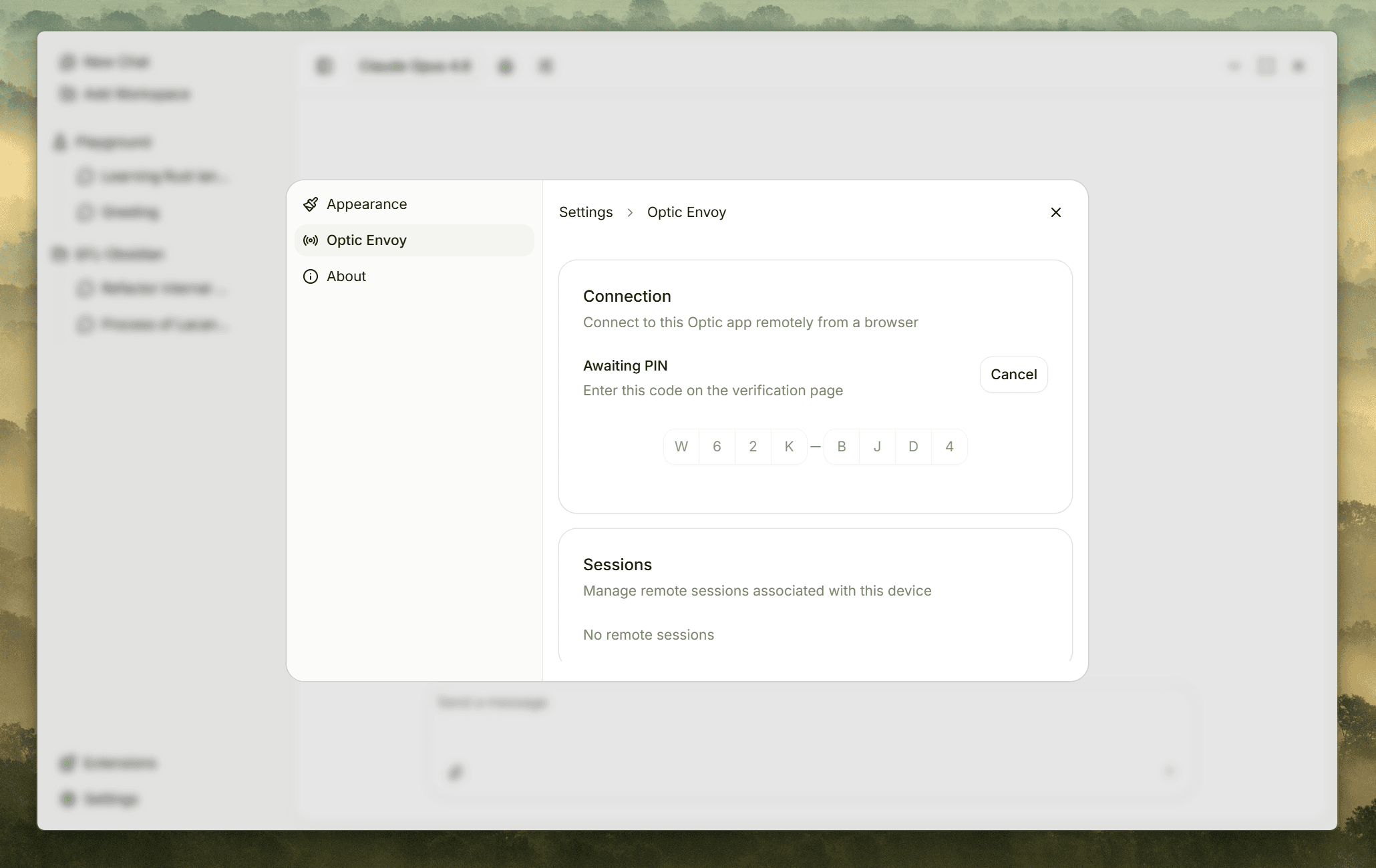

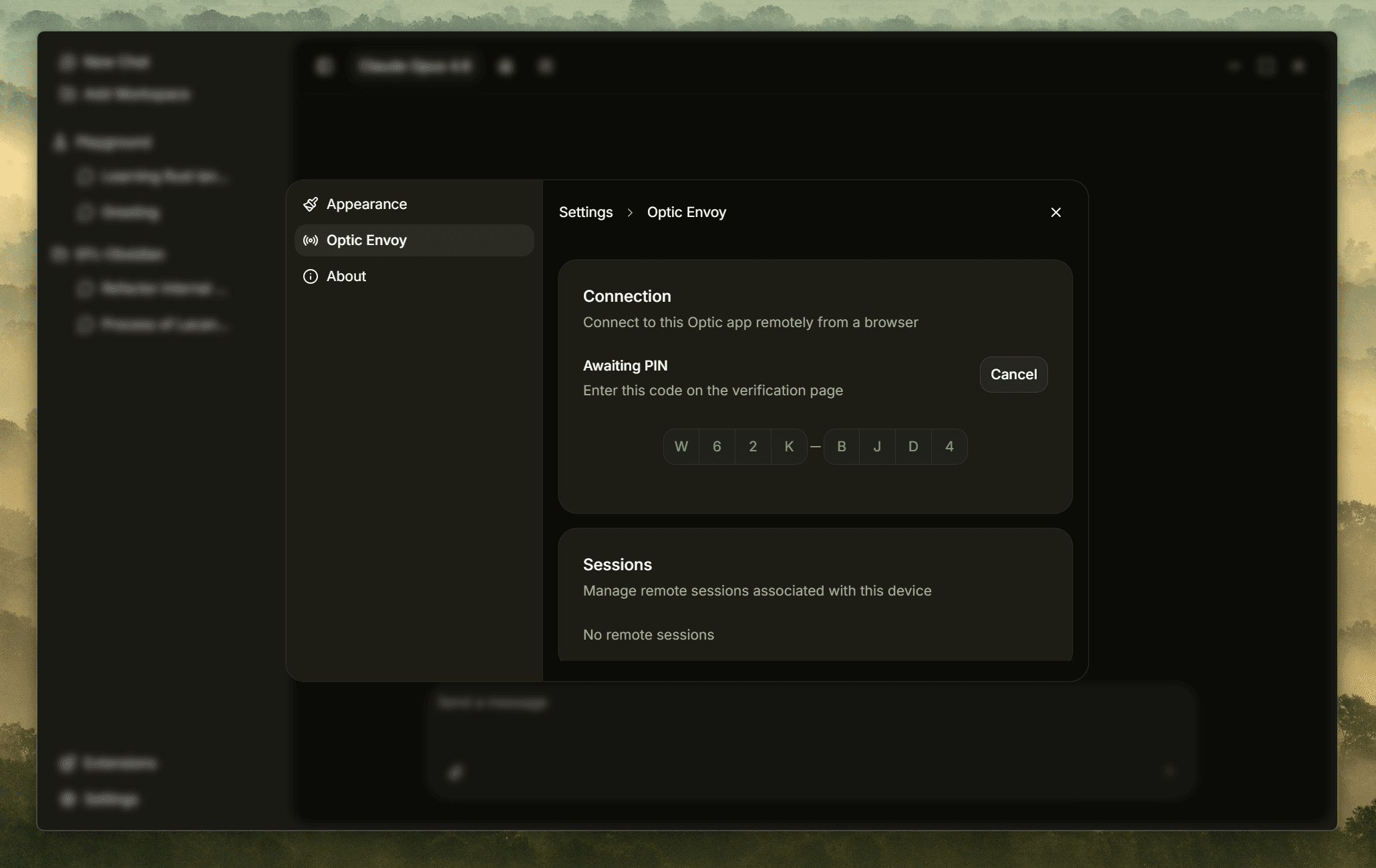

Optic Envoy

Access Optic Desktop from any device via web. Continue your work wherever you are.

Built for Trust

Transparency, security, and privacy at every layer.

Transparent Prompts

Full control over every message the model receives. Supports Agent Skills for structured instructions.

Security First

Carefully controlled permissions. No excessive access granted to LLMs by default.

Privacy Focused

Zero telemetry. Connects to our servers only for license activation and the latest model info.

Stateless by Design

No memory, no drift. Ensures maximum instruction adherence in every conversation.

Pricing

Pay once, use forever. No subscriptions for core features.

Optic

Launch Discount: $10 → $6, Lifetime

Everything you need to get started.

Unlimited conversations

All core features

Unlimited models

Bug report support with guaranteed responses

Optic Pro

Launch Discount: $20 → $12, Lifetime

Unlock the full range of Optic capabilities.

Optic Compact: a carefully designed context compression method that reduces quota consumption

Activate on up to 2 devices

Optic Envoy: access from any device via web

Optic Forge: Subagents framework (coming soon)

Run the headless binary continuously on a NAS, VPS, etc.

Priority support + feature request replies

Full theme customization (coming soon)

Optic Max

$30/year

The full experience with premium support.

Up to 5 devices, unlimited Envoy connections

One additional Pro license included

All Optic Pro features

Revert to Pro anytime

Optic Specialists — expert LLM consultants

Priority bug reports and feature requests